定义

一个随机变量 X X X 期望 (expectation, or mean)定义为

E [ X ] = ∑ x x ⋅ p X ( x ) \mathbb{E}[X] = \sum_{x} x \cdot p_X(x)

E [ X ] = x ∑ x ⋅ p X ( x )

其中 p X p_X p X X X X pmf )。

E [ X ] \mathbb{E}[X] E [ X ] ∞ \infty ∞ E [ X ] < ∞ \mathbb{E}[X] < \infty E [ X ] < ∞ 绝对收敛 (absolute convergence)。一些反例:

圣彼得堡悖论 :p X ( 2 k ) = 2 − k p_X(2^{k}) = 2^{-k} p X ( 2 k ) = 2 − k X ∈ Z \ { 0 } , p X ( k ) = 1 a k 2 , a = π 2 3 X \in \Z \backslash\left\lbrace 0 \right\rbrace,\, p_X(k) = \dfrac{1}{ak^2},\, a = \dfrac{\pi^2}{3} X ∈ Z \ { 0 } , p X ( k ) = a k 2 1 , a = 3 π 2

性质

指示随机变量

对于以 p p p X ∈ { 0 , 1 } X \in \left\lbrace 0, 1 \right\rbrace X ∈ { 0 , 1 }

E [ X ] = 0 ⋅ ( 1 − p ) + 1 ⋅ p = p \mathbb{E}[X] = 0 \cdot (1-p) + 1 \cdot p = p

E [ X ] = 0 ⋅ ( 1 − p ) + 1 ⋅ p = p

对于事件 A A A X = I ( A ) X = I(A) X = I ( A )

E [ X ] = 0 ⋅ Pr ( A c ) + 1 ⋅ Pr ( A ) = Pr ( A ) \mathbb{E}[X] = 0 \cdot \Pr(A^c) + 1 \cdot \Pr(A) = \Pr(A)

E [ X ] = 0 ⋅ Pr ( A c ) + 1 ⋅ Pr ( A ) = Pr ( A )

Law Of The Unconscious Statistician (LOTUS)

LOTUS

对于 f : R → R f\colon \R \to \R f : R → R X X X X = ( X 1 , … , X n ) \bm{X} = (X_1, \dots, X_n) X = ( X 1 , … , X n )

E [ f ( X ) ] = ∑ x f ( x ) p X ( x ) \mathbb{E}[f(X)] = \sum_x f(x)p_X(x) E [ f ( X )] = ∑ x f ( x ) p X ( x ) E [ f ( X ) ] = ∑ x f ( x ) p X ( x ) = ∑ ( x 1 , … , x n ) f ( x 1 , … , x n ) p X ( x 1 , … , x n ) \mathbb{E}[f(\bm{X})] = \sum_{\bm{x}} f(\bm{x})p_{\bm{X}}(\bm{x}) = \sum\limits_{(x_1, \dots, x_n)}f(x_1, \dots, x_n)p_{\bm{X}}(x_1, \dots, x_n) E [ f ( X )] = ∑ x f ( x ) p X ( x ) = ( x 1 , … , x n ) ∑ f ( x 1 , … , x n ) p X ( x 1 , … , x n )

证明

令 Y = f ( X 1 , … , X n ) Y = f(X_1, \dots, X_n) Y = f ( X 1 , … , X n )

E [ f ( X 1 , … , X n ) ] = ∑ y y Pr ( Y = y ) = ∑ y y ∑ ( x 1 , … , x n ) ∈ f − 1 ( y ) Pr [ ( X 1 , … , X n ) = ( x 1 , … , x n ) ] = ∑ ( x 1 , … , x n ) f ( x 1 , … , x n ) Pr [ ( X 1 , … , X n ) = ( x 1 , … , x n ) ] = ∑ ( x 1 , … , x n ) f ( x 1 , … , x n ) p X ( x 1 , … , x n ) \begin{aligned}

\mathbb{E}[f(X_1, \dots, X_n)] &= \sum_y y \Pr(Y = y) \\

&= \sum_y y\sum_{(x_1, \dots, x_n) \in f^{-1}(y)} \Pr[(X_1, \dots, X_n) = (x_1, \dots, x_n)] \\

&= \sum_{(x_1, \dots, x_n)} f(x_1, \dots, x_n) \Pr[(X_1, \dots, X_n) = (x_1, \dots, x_n)] \\

&= \sum_{(x_1, \dots, x_n)} f(x_1, \dots, x_n) p_{\bm{X}}(x_1, \dots, x_n)

\end{aligned}

E [ f ( X 1 , … , X n )] = y ∑ y Pr ( Y = y ) = y ∑ y ( x 1 , … , x n ) ∈ f − 1 ( y ) ∑ Pr [( X 1 , … , X n ) = ( x 1 , … , x n )] = ( x 1 , … , x n ) ∑ f ( x 1 , … , x n ) Pr [( X 1 , … , X n ) = ( x 1 , … , x n )] = ( x 1 , … , x n ) ∑ f ( x 1 , … , x n ) p X ( x 1 , … , x n )

期望的线性性质

对于 a , b ∈ R a, b \in \R a , b ∈ R X , Y X, Y X , Y

E [ a X + b ] = a E [ X ] + b \mathbb{E}[aX + b] = a\mathbb{E}[X] + b E [ a X + b ] = a E [ X ] + b E [ X + Y ] = E [ X ] + E [ Y ] \mathbb{E}[X + Y] = \mathbb{E}[X] + \mathbb{E}[Y] E [ X + Y ] = E [ X ] + E [ Y ]

对于在随机变量 X 1 , … , X n X_1, \dots, X_n X 1 , … , X n 线性(仿射) (linear (affine))函数 f f f

E [ f ( X 1 , … , X n ) ] = f ( E [ X 1 ] , … , E [ X n ] ) \mathbb{E}[f(X_1, \dots, X_n)] = f(\mathbb{E}[X_1], \dots, \mathbb{E}[X_n])

E [ f ( X 1 , … , X n )] = f ( E [ X 1 ] , … , E [ X n ])

对于任意相互不独立(dependent)的随机变量 X 1 , … , X n X_1, \dots, X_n X 1 , … , X n

证明

Proof 1.

E [ a X + b ] = ∑ x ( a x + b ) p X ( x ) = a ∑ x x p X ( x ) + b ∑ x p X ( x ) = a E [ X ] + b \begin{aligned}

\mathbb{E}[aX + b] &= \sum_x (ax + b)p_X(x) \\

&= a \sum_x x p_X(x) + b \sum_x p_X(x) \\

&= a \mathbb{E}[X] + b

\end{aligned}

E [ a X + b ] = x ∑ ( a x + b ) p X ( x ) = a x ∑ x p X ( x ) + b x ∑ p X ( x ) = a E [ X ] + b

Proof 2.

E [ X + Y ] = ∑ x , y ( x + y ) Pr [ ( X , Y ) = ( x , y ) ] = ∑ x x ∑ y Pr [ ( X , Y ) = ( x , y ) ] + ∑ y y ∑ x Pr [ ( X , Y ) = ( x , y ) ] = ∑ x x Pr [ X = x ] + ∑ y y Pr [ Y = y ] = ∑ x x p X ( x ) + ∑ y y p Y ( y ) = E [ X ] + E [ Y ] \begin{aligned}

\mathbb{E}[X + Y] &= \sum_{x, y} (x + y)\Pr[(X, Y) = (x, y)] \\

&= \sum_x x \sum_y \Pr[(X, Y) = (x, y)] + \sum_y y \sum_x \Pr[(X, Y) = (x, y)] \\

&= \sum_x x \Pr[X = x] + \sum_y y \Pr[Y = y] \\

&= \sum_x x p_X(x) + \sum_y y p_Y(y) \\

&= \mathbb{E}[X] + \mathbb{E}[Y]

\end{aligned}

E [ X + Y ] = x , y ∑ ( x + y ) Pr [( X , Y ) = ( x , y )] = x ∑ x y ∑ Pr [( X , Y ) = ( x , y )] + y ∑ y x ∑ Pr [( X , Y ) = ( x , y )] = x ∑ x Pr [ X = x ] + y ∑ y Pr [ Y = y ] = x ∑ x p X ( x ) + y ∑ y p Y ( y ) = E [ X ] + E [ Y ]

一些分布的期望

泊松分布

泊松分布 X ∼ Pois ( λ ) X \sim \operatorname{Pois}(\lambda) X ∼ Pois ( λ )

E [ X ] = λ \mathbb{E}[X] = \lambda

E [ X ] = λ

证明

E [ X ] = ∑ k ⩾ 0 k e − λ λ k k ! = ∑ k ⩾ 1 e − λ λ k ( k − 1 ) ! = ∑ k ⩾ 0 e − λ λ k + 1 k ! = λ ∑ k ⩾ 0 e − λ λ k k ! = λ \begin{aligned}

\mathbb{E}[X] &= \sum_{k\ge 0} k \dfrac{\e^{-\lambda}\lambda^{k}}{k!}\\

&= \sum_{k\ge 1} \dfrac{\e^{-\lambda}\lambda^{k}}{(k-1)!}\\

&= \sum_{k\ge 0} \dfrac{\e^{-\lambda}\lambda^{k+1}}{k!}\\

&= \lambda \sum_{k\ge 0} \dfrac{\e^{-\lambda}\lambda^{k}}{k!}\\

&= \lambda

\end{aligned}

E [ X ] = k ⩾ 0 ∑ k k ! e − λ λ k = k ⩾ 1 ∑ ( k − 1 )! e − λ λ k = k ⩾ 0 ∑ k ! e − λ λ k + 1 = λ k ⩾ 0 ∑ k ! e − λ λ k = λ

二项分布

对于二项随机变量 X ∼ Bin ( n , p ) X \sim \operatorname{Bin}(n, p) X ∼ Bin ( n , p )

E [ X ] = n p \mathbb{E}[X] = np

E [ X ] = n p

证明

E [ X ] = ∑ k = 0 n k ( n k ) p k ( 1 − p ) n − k \mathbb{E}[X] = \sum_{k=0}^{n} k \dbinom{n}{k}p^{k}(1-p)^{n-k}

E [ X ] = k = 0 ∑ n k ( k n ) p k ( 1 − p ) n − k

不过注意到 X ∼ Bin ( n , p ) X \sim \operatorname{Bin}(n, p) X ∼ Bin ( n , p ) X = X 1 + ⋯ + X n X = X_1 + \dots + X_n X = X 1 + ⋯ + X n X i X_i X i p p p

E [ X ] = E [ X 1 ] + ⋯ + E [ X n ] = n p \mathbb{E}[X] = \mathbb{E}[X_1] + \dots + \mathbb{E}[X_n] = np

E [ X ] = E [ X 1 ] + ⋯ + E [ X n ] = n p

几何分布

对于几何随机变量 X ∼ Geo ( p ) X \sim \operatorname{Geo}(p) X ∼ Geo ( p )

E [ X ] = 1 p \mathbb{E}[X] = \dfrac{1}{p}

E [ X ] = p 1

证明

E [ X ] = ∑ k ⩾ 1 k ( 1 − p ) k − 1 p \mathbb{E}[X] = \sum_{k\ge 1} k(1-p)^{k-1}p \\

E [ X ] = k ⩾ 1 ∑ k ( 1 − p ) k − 1 p

注意到 X ∼ Geo ( p ) X \sim \operatorname{Geo}(p) X ∼ Geo ( p ) X = ∑ k ⩾ 1 I k \displaystyle X = \sum_{k \ge 1}I_{k} X = k ⩾ 1 ∑ I k I k I_{k} I k 前 k − 1 k-1 k − 1 全部 失败 (indicate whether all of the first k − 1 k-1 k − 1

E [ X ] = ∑ k ⩾ 1 E [ I k ] = ∑ k ⩾ 1 ( 1 − p ) k − 1 = 1 p \begin{aligned}

\mathbb{E}[X] &= \sum_{k\ge 1} \mathbb{E}[I_{k}] \\

&= \sum_{k\ge 1}(1-p)^{k-1}\\

&= \dfrac{1}{p}

\end{aligned}

E [ X ] = k ⩾ 1 ∑ E [ I k ] = k ⩾ 1 ∑ ( 1 − p ) k − 1 = p 1

负二项分布

对于以 r , p r, p r , p X X X

E [ X ] = r 1 − p p \mathbb{E}[X] = r \dfrac{1-p}{p}

E [ X ] = r p 1 − p

证明

E [ X ] = ∑ k ⩾ 1 k ( k + r − 1 k ) ( 1 − p ) k p r \mathbb{E}[X] = \sum_{k\ge 1} k\dbinom{k+r-1}{k}(1-p)^{k}p^r

E [ X ] = k ⩾ 1 ∑ k ( k k + r − 1 ) ( 1 − p ) k p r

X X X ( X 1 − 1 ) + ⋯ + ( X r − 1 ) (X_1 - 1) + \dots + (X_r - 1) ( X 1 − 1 ) + ⋯ + ( X r − 1 ) X i X_i X i p p p

E [ X ] = E [ X 1 ] + ⋯ + E [ X r ] − r = r 1 − p p \mathbb{E}[X] = \mathbb{E}[X_1] + \dots + \mathbb{E}[X_r] - r = r \dfrac{1-p}{p}

E [ X ] = E [ X 1 ] + ⋯ + E [ X r ] − r = r p 1 − p

超几何分布

对于以 N , M , n N, M, n N , M , n X X X

E [ X ] = n M N \mathbb{E}[X] = n \dfrac{M}{N}

E [ X ] = n N M

证明

E [ X ] = ∑ k = 0 n k ( M k ) ( N − M n − k ) ( N n ) \mathbb{E}[X] = \sum_{k=0}^{n} k \dfrac{\binom{M}{k}\binom{N-M}{n-k}}{\binom{N}{n}}

E [ X ] = k = 0 ∑ n k ( n N ) ( k M ) ( n − k N − M )

每个红球(成功)以概率 ( N − 1 n − 1 ) ( N n ) = n N \dfrac{\binom{N-1}{n-1}}{\binom{N}{n}} = \dfrac{n}{N} ( n N ) ( n − 1 N − 1 ) = N n X = X 1 + ⋯ + X M X = X_1 + \dots + X_M X = X 1 + ⋯ + X M X i ∈ { 0 , 1 } X_i \in \left\lbrace 0, 1 \right\rbrace X i ∈ { 0 , 1 } 第 i i i ,于是

E [ X ] = E [ X 1 ] + ⋯ + E [ X M ] = n M N \mathbb{E}[X] = \mathbb{E}[X_1] + \dots + \mathbb{E}[X_M] = n \dfrac{M}{N}

E [ X ] = E [ X 1 ] + ⋯ + E [ X M ] = n N M

例子

模式匹配(Pattern Matching)

记 s = ( s 1 , … , s n ) ∈ Q n s = (s_1, \dots, s_n) \in Q^n s = ( s 1 , … , s n ) ∈ Q n n n n q q q Q Q Q

对于长度为 k k k pattern )π ∈ Q k \pi \in Q^{k} π ∈ Q k X X X π \pi π s s s

记 I i ∈ { 0 , 1 } I_i \in \left\lbrace 0, 1 \right\rbrace I i ∈ { 0 , 1 } 第 i i i π = ( s i , … , s i + k − 1 ) \pi = (s_i, \dots, s_{i+k-1}) π = ( s i , … , s i + k − 1 ) ,于是 X = ∑ i = 1 n − k + 1 I i \displaystyle X = \sum_{i=1}^{n-k+1}I_i X = i = 1 ∑ n − k + 1 I i

期望的线性性质给出

E [ X ] = ∑ i = 1 n − k + 1 E [ I i ] = ( n − k + 1 ) E [ I i ] = ( n − k + 1 ) q − k \begin{aligned}

\mathbb{E}[X] &= \sum_{i=1}^{n-k+1}\mathbb{E}[I_i] \\

&= (n-k+1)\mathbb{E}[I_i] \\

&= (n-k+1)q^{-k}

\end{aligned}

E [ X ] = i = 1 ∑ n − k + 1 E [ I i ] = ( n − k + 1 ) E [ I i ] = ( n − k + 1 ) q − k

字串匹配次数的期望与给定字串 π \pi π

但是期望的首次匹配成功的位置(expected time(position) for the first appearance)就可能与 π \pi π

假设有 n n n n n n

Balls-into-bins 模型:一个个地扔球,u.a.r.,使得 n n n

X X X n n n X i X_i X i i − 1 i-1 i − 1

X i X_i X i p i p_i p i p i = 1 − i − 1 n p_i = 1 - \dfrac{i-1}{n} p i = 1 − n i − 1 X = ∑ i = 1 n X i \displaystyle X = \sum_{i=1}^{n}X_i X = i = 1 ∑ n X i

E [ X ] = ∑ i = 1 n E [ X i ] = ∑ i = 1 n n n − i + 1 = n ∑ i = 1 n 1 i = n H ( n ) ≈ n ln n \begin{aligned}

\mathbb{E}[X] &= \sum_{i=1}^{n}\mathbb{E}[X_i] \\

&= \sum_{i=1}^{n} \dfrac{n}{n-i+1}\\

&= n \sum_{i=1}^{n} \dfrac{1}{i}\\

&= n H(n)\\

&\approx n \ln n

\end{aligned}

E [ X ] = i = 1 ∑ n E [ X i ] = i = 1 ∑ n n − i + 1 n = n i = 1 ∑ n i 1 = n H ( n ) ≈ n ln n

其中 H ( n ) H(n) H ( n ) n n n

双重计数法(Double Counting)

对于非负随机变量 X X X { 0 , 1 , … } \left\lbrace 0, 1, \dots \right\rbrace { 0 , 1 , … }

E [ X ] = ∑ k = 0 ∞ Pr [ X > k ] \mathbb{E}[X] = \sum_{k=0}^{\infty}\Pr[X > k]

E [ X ] = k = 0 ∑ ∞ Pr [ X > k ]

证明一(双重计数)

E [ X ] = ∑ x ⩾ 0 x Pr [ X = x ] = ∑ x ⩾ 0 ∑ k = 0 x − 1 Pr [ X = x ] = ∑ k ⩾ 0 ∑ x > k Pr [ X = x ] = ∑ k ⩾ 0 Pr [ X > k ] \begin{aligned}

\mathbb{E}[X] &= \sum_{x \ge 0} x \Pr[X = x]\\

&= \sum_{x\ge 0}\sum_{k=0}^{x-1}\Pr[X = x]\\

&= \sum_{k\ge 0}\sum_{x > k} \Pr[X = x]\\

&= \sum_{k\ge 0}\Pr[X > k]

\end{aligned}

E [ X ] = x ⩾ 0 ∑ x Pr [ X = x ] = x ⩾ 0 ∑ k = 0 ∑ x − 1 Pr [ X = x ] = k ⩾ 0 ∑ x > k ∑ Pr [ X = x ] = k ⩾ 0 ∑ Pr [ X > k ]

纵轴表示 x x x p X p_X p X x x x k k k

证明二(期望的线性性质)

令 I k ∈ { 0 , 1 } I_{k} \in \left\lbrace 0, 1 \right\rbrace I k ∈ { 0 , 1 } X > k X > k X > k X = ∑ k ⩾ 0 I k X = \sum_{k \ge 0}I_{k} X = ∑ k ⩾ 0 I k

E [ X ] = ∑ k ⩾ 0 E [ I k ] = ∑ k ⩾ 0 Pr [ X > k ] \begin{aligned}

\mathbb{E}[X] &= \sum_{k\ge 0} \mathbb{E}[I_{k}]\\

&= \sum_{k\ge 0} \Pr[X > k]

\end{aligned}

E [ X ] = k ⩾ 0 ∑ E [ I k ] = k ⩾ 0 ∑ Pr [ X > k ]

哈希表 (Hash table):全集 U U U n n n h : U → [ m ] h\colon U \to [m] h : U → [ m ] m m m

开放寻址 (open addressing):当哈希冲突 (hash collision)发生时,探测策略(probing strategy)为,当查找一个键值 x ∈ U x \in U x ∈ U i i i h ( x , i ) h(x, i) h ( x , i )

线性探测 (linear probing):h ( x , i ) = h ( x ) + i ( m o d m ) h(x, i) = h(x) + i \pmod m h ( x , i ) = h ( x ) + i ( mod m ) 二次探测 (quadratic probing):h ( x , i ) = h ( x ) + c 1 i + c 2 i 2 ( m o d m ) h(x, i) = h(x) + c_1i + c_2i^2 \pmod m h ( x , i ) = h ( x ) + c 1 i + c 2 i 2 ( mod m ) 双重哈希 (double hashing):h ( x , i ) = h 1 ( x ) + i h 2 ( x ) ( m o d m ) h(x, i) = h_1(x) + ih_2(x) \pmod m h ( x , i ) = h 1 ( x ) + i h 2 ( x ) ( mod m ) uniform hashing :h ( x , i ) = π ( i ) h(x, i) = \pi(i) h ( x , i ) = π ( i ) π \pi π [ m ] [m] [ m ] 随机排列 (random permutation)。是最理想的情况。

在一个负载因子 (load factor)为 α = n m \alpha = \dfrac{n}{m} α = m n 1 1 − α \dfrac{1}{1-\alpha} 1 − α 1

证明

假设 X X X A i A_i A i i i i

E [ X ] = ∑ k = 0 ∞ Pr [ X > k ] = 1 + ∑ k = 1 ∞ Pr [ X > k ] = 1 + ∑ k = 1 ∞ Pr ( ⋂ i = 1 k A i ) = 1 + ∑ k = 1 ∞ ∏ i = 1 k Pr ( A i ∣ ⋂ j < i A j ) (chain rule) = 1 + ∑ k = 1 ∞ ∏ i = 1 k n − i + 1 m − i + 1 ⩽ 1 + ∑ k = 1 ∞ ∏ i = 1 k n m = 1 + ∑ k = 1 ∞ α k = 1 1 − α \begin{aligned}

\mathbb{E}[X] &= \sum_{k=0}^{\infty} \Pr[X > k]\\

&= 1 + \sum_{k=1}^{\infty} \Pr[X > k]\\

&= 1 + \sum_{k=1}^{\infty} \Pr\left(\bigcap_{i=1}^{k} A_i\right)\\

&= 1 + \sum_{k=1}^{\infty} \prod_{i=1}^{k} \Pr\left(A_i \Biggm| \bigcap_{j < i} A_{j}\right)\quad \text{(chain rule)}\\

&= 1 + \sum_{k=1}^{\infty} \prod_{i=1}^{k} \dfrac{n-i+1}{m-i+1}\\

&\le 1 + \sum_{k=1}^{\infty}\prod_{i=1}^{k}\dfrac{n}{m}\\

&= 1 + \sum_{k=1}^{\infty}\alpha^{k}\\

&= \dfrac{1}{1-\alpha}

\end{aligned}

E [ X ] = k = 0 ∑ ∞ Pr [ X > k ] = 1 + k = 1 ∑ ∞ Pr [ X > k ] = 1 + k = 1 ∑ ∞ Pr ( i = 1 ⋂ k A i ) = 1 + k = 1 ∑ ∞ i = 1 ∏ k Pr ( A i j < i ⋂ A j ) (chain rule) = 1 + k = 1 ∑ ∞ i = 1 ∏ k m − i + 1 n − i + 1 ⩽ 1 + k = 1 ∑ ∞ i = 1 ∏ k m n = 1 + k = 1 ∑ ∞ α k = 1 − α 1

容斥原理

记 I ( A ) ∈ { 0 , 1 } I(A) \in \left\lbrace 0, 1 \right\rbrace I ( A ) ∈ { 0 , 1 } A A A

I ( A c ) = 1 − I ( A ) I(A^c) = 1 - I(A) I ( A c ) = 1 − I ( A ) I ( A ∩ B ) = I ( A ) ⋅ I ( B ) I(A \cap B) = I(A) \cdot I(B) I ( A ∩ B ) = I ( A ) ⋅ I ( B )

对于事件 A 1 , … , A n A_1, \dots, A_n A 1 , … , A n

I ( ⋃ i = 1 n A i ) = 1 − I ( ⋂ i = 1 n A i c ) = 1 − ∏ i = 1 n I ( A i c ) = 1 − ∏ i = 1 n ( 1 − I ( A i ) ) = 1 − ∑ S ⊆ [ n ] ( − 1 ) ∣ S ∣ ∏ i ∈ S I ( A i ) (二项式定理) = 1 − ∑ S ⊆ [ n ] ( − 1 ) ∣ S ∣ I ( ⋂ i ∈ S A i ) = ∑ ∅ ≠ S ⊆ [ n ] ( − 1 ) ∣ S ∣ − 1 I ( ⋂ i ∈ S A i ) (1 跟 ∅ 抵消了) \begin{aligned}

I\left( \bigcup_{i=1}^n A_i \right) &= 1 - I\left( \bigcap_{i=1}^n A_i^c \right)\\

&= 1 - \prod_{i=1}^n I(A_i^c)\\

&= 1 - \prod_{i=1}^n \left(1 - I(A_i)\right)\\

&= 1 - \sum_{S \subseteq [n]}(-1)^{\left| S \right|}\prod_{i \in S}I(A_i)\quad \text{(二项式定理)}\\

&= 1 - \sum_{S \subseteq [n]}(-1)^{\left| S \right|} I\left(\bigcap_{i \in S}A_i\right)\\

&= \sum_{\empty \ne S \subseteq [n]}(-1)^{\left| S \right| - 1}I\left(\bigcap_{i \in S}A_i\right)\quad \text{(1 跟 $\empty$ 抵消了)}

\end{aligned}

I ( i = 1 ⋃ n A i ) = 1 − I ( i = 1 ⋂ n A i c ) = 1 − i = 1 ∏ n I ( A i c ) = 1 − i = 1 ∏ n ( 1 − I ( A i ) ) = 1 − S ⊆ [ n ] ∑ ( − 1 ) ∣ S ∣ i ∈ S ∏ I ( A i ) (二项式定理) = 1 − S ⊆ [ n ] ∑ ( − 1 ) ∣ S ∣ I ( i ∈ S ⋂ A i ) = ∅ = S ⊆ [ n ] ∑ ( − 1 ) ∣ S ∣ − 1 I ( i ∈ S ⋂ A i ) ( 1 跟 ∅ 抵消了)

布尔不等式

对于事件 A 1 , … , A n A_1, \dots, A_n A 1 , … , A n

I ( ⋃ i = 1 n A i ) = 1 − ∏ i = 1 n ( 1 − I ( A i ) ) = ∑ k = 1 n ( − 1 ) k − 1 ∑ S ∈ ( [ n ] k ) I ( ⋂ i ∈ S A i ) \begin{aligned}

I\left( \bigcup_{i=1}^n A_i \right) &= 1 - \prod_{i=1}^n \left(1 - I(A_i)\right)\\

&= \sum_{k=1}^{n} (-1)^{k-1}\sum_{S \in \binom{[n]}{k}} I\left(\bigcap_{i \in S}A_i\right)\\

\end{aligned}

I ( i = 1 ⋃ n A i ) = 1 − i = 1 ∏ n ( 1 − I ( A i ) ) = k = 1 ∑ n ( − 1 ) k − 1 S ∈ ( k [ n ] ) ∑ I ( i ∈ S ⋂ A i )

定义随机变量 X k ≔ ( ∑ i = 1 n I ( A i ) k ) X_{k} \coloneqq \dbinom{\sum\limits_{i=1}^{n}I(A_i)}{k} X k : = ( k i = 1 ∑ n I ( A i ) )

X k = ∑ S ∈ ( [ n ] k ) ∏ i ∈ S I ( A i ) = ∑ S ∈ ( [ n ] k ) I ( ⋂ i ∈ S A i ) \begin{aligned}

X_{k} &= \sum_{S \in \binom{[n]}{k}} \prod_{i \in S} I(A_i)\\

&= \sum_{S \in \binom{[n]}{k}} I\left(\bigcap_{i \in S}A_i\right)\\

\end{aligned}

X k = S ∈ ( k [ n ] ) ∑ i ∈ S ∏ I ( A i ) = S ∈ ( k [ n ] ) ∑ I ( i ∈ S ⋂ A i )

X k X_k X k

对于单峰序列 X k X_{k} X k

∑ k ⩽ 2 t ( − 1 ) k − 1 X k ⩽ ∑ k = 1 n ( − 1 ) k − 1 X k ⩽ ∑ k ⩽ 2 t + 1 ( − 1 ) k − 1 X k \sum_{k \le 2t} (-1)^{k-1} X_{k} \le \sum_{k=1}^{n} (-1)^{k-1} X_{k} \le \sum_{k \le 2t+1} (-1)^{k-1} X_{k}

k ⩽ 2 t ∑ ( − 1 ) k − 1 X k ⩽ k = 1 ∑ n ( − 1 ) k − 1 X k ⩽ k ⩽ 2 t + 1 ∑ ( − 1 ) k − 1 X k

求期望则可得 Boolean-Bonferroni 不等式。

线性性质的局限性

对于无穷求和,线性性质不一定成立。

对于无穷随机变量 X 1 , X 2 , … X_1, X_2, \dots X 1 , X 2 , … E [ ∑ i = 1 ∞ X i ] = ∑ i = 1 ∞ E [ X i ] \displaystyle \mathbb{E}\left[\sum_{i=1}^{\infty}X_i\right] = \sum_{i=1}^{\infty} \mathbb{E}[X_i] E [ i = 1 ∑ ∞ X i ] = i = 1 ∑ ∞ E [ X i ] ∑ i = 1 ∞ E [ ∣ X i ∣ ] < ∞ \displaystyle \sum_{i=1}^{\infty} \mathbb{E}[|X_i|] < \infty i = 1 ∑ ∞ E [ ∣ X i ∣ ] < ∞

存在 E [ ∑ i = 1 ∞ X i ] < ∞ , ∑ i = 1 ∞ E [ X i ] < ∞ \displaystyle \mathbb{E}\left[\sum_{i=1}^{\infty}X_i\right] < \infty,\, \sum_{i=1}^{\infty} \mathbb{E}[X_i] < \infty E [ i = 1 ∑ ∞ X i ] < ∞ , i = 1 ∑ ∞ E [ X i ] < ∞ E [ ∑ i = 1 ∞ X i ] ≠ ∑ i = 1 ∞ E [ X i ] \displaystyle \mathbb{E}\left[\sum_{i=1}^{\infty}X_i\right] \ne \sum_{i=1}^{\infty} \mathbb{E}[X_i] E [ i = 1 ∑ ∞ X i ] = i = 1 ∑ ∞ E [ X i ]

反例:公平赌博游戏中采取亏损加仓投注策略 (martingale betting strategy )从 1 1 1 1 1 1 0 0 0

随机多个(N N N X 1 , … , X N X_1, \dots, X_N X 1 , … , X N

E [ ∑ i = 1 N X i ] = ? E [ N ] E [ X 1 ] \mathbb{E}\left[\sum_{i=1}^{N}X_i\right] \mathop{{=}\mathllap{?\,}} \mathbb{E}[N] \mathbb{E}[X_1]

E [ i = 1 ∑ N X i ] = ? E [ N ] E [ X 1 ]

令 { X n } n ⩾ 1 \left\lbrace X_n \right\rbrace_{n\ge 1} { X n } n ⩾ 1 N N N X n X_n X n E [ ∑ i = 1 N X i ] = E [ N ] E [ X 1 ] \mathbb{E}\left[ \displaystyle \sum_{i=1}^N X_i \right] = \mathbb{E}[N] \mathbb{E}[X_1] E [ i = 1 ∑ N X i ] = E [ N ] E [ X 1 ]

E [ ∑ i = 1 N X i ] = ∑ n Pr ( N = n ) E [ ∑ i = 1 n X i ] = ∑ n Pr ( N = n ) ∑ i = 1 n E [ X i ] = ∑ n Pr ( N = n ) n E [ X 1 ] = E [ X 1 ] ∑ n n Pr ( N = n ) = E [ X 1 ] E [ N ] \begin{aligned}

\mathbb{E}\left[ \sum_{i=1}^N X_i \right] &= \sum_{n} \Pr(N = n) \mathbb{E}\left[ \sum_{i=1}^n X_i \right]\\

&= \sum_{n} \Pr(N = n) \sum_{i=1}^n \mathbb{E}\left[ X_i \right]\\

&= \sum_{n} \Pr(N = n) n \mathbb{E}\left[ X_1 \right]\\

&= \mathbb{E}[X_1] \sum_n n \Pr(N = n)\\

&= \mathbb{E}[X_1] \mathbb{E}[N]

\end{aligned}

E [ i = 1 ∑ N X i ] = n ∑ Pr ( N = n ) E [ i = 1 ∑ n X i ] = n ∑ Pr ( N = n ) i = 1 ∑ n E [ X i ] = n ∑ Pr ( N = n ) n E [ X 1 ] = E [ X 1 ] n ∑ n Pr ( N = n ) = E [ X 1 ] E [ N ]

条件期望

随机变量 X X X A A A 条件期望 (conditional expectation)定义为

E [ X ∣ A ] = ∑ x x Pr [ X = x ∣ A ] \mathbb{E}[X \mid A] = \sum_{x} x \Pr[X = x \mid A]

E [ X ∣ A ] = x ∑ x Pr [ X = x ∣ A ]

其中假设 Pr ( A ) > 0 \Pr(A) > 0 Pr ( A ) > 0

条件分布

随机变量 X X X A A A p X ∣ A : Z → [ 0 , 1 ] p_{X \mid A}\colon \Z \to [0, 1] p X ∣ A : Z → [ 0 , 1 ]

p X ∣ A ( x ) = Pr [ X = x ∣ A ] p_{X \mid A}(x) = \Pr[X = x \mid A]

p X ∣ A ( x ) = Pr [ X = x ∣ A ]

( X ∣ A ) (X \mid A) ( X ∣ A ) pmf p X ∣ A p_{X \mid A} p X ∣ A

条件期望 E [ X ∣ A ] \mathbb{E}[X \mid A] E [ X ∣ A ] ( X ∣ A ) (X \mid A) ( X ∣ A )

全期望法则(Law of Total Expectation)

全期望法则

X X X E [ X ] \mathbb{E}[X] E [ X ]

令事件 B 1 , … , B n B_1, \dots, B_n B 1 , … , B n Ω \Omega Ω Pr ( B i ) > 0 \Pr(B_i) > 0 Pr ( B i ) > 0

E [ X ] = ∑ i = 1 n E [ X ∣ B i ] Pr ( B i ) \mathbb{E}[X] = \sum_{i=1}^{n}\mathbb{E}[X \mid B_i]\Pr(B_i)

E [ X ] = i = 1 ∑ n E [ X ∣ B i ] Pr ( B i )

证明

E [ X ] = ∑ x x Pr [ X = x ] = ∑ x ∑ i = 1 n Pr [ X = x ∣ B i ] Pr ( B i ) = ∑ i = 1 n Pr ( B i ) ∑ x x Pr [ X = x ∣ B i ] = ∑ i = 1 n E [ X ∣ B i ] Pr ( B i ) \begin{aligned}

\mathbb{E}[X] &= \sum_x x \Pr[X = x]\\

&= \sum_x \sum_{i=1}^{n} \Pr[X = x \mid B_i] \Pr(B_i)\\

&= \sum_{i=1}^{n} \Pr(B_i) \sum_x x \Pr[X = x \mid B_i]\\

&= \sum_{i=1}^{n} \mathbb{E}[X \mid B_i]\Pr(B_i)

\end{aligned}

E [ X ] = x ∑ x Pr [ X = x ] = x ∑ i = 1 ∑ n Pr [ X = x ∣ B i ] Pr ( B i ) = i = 1 ∑ n Pr ( B i ) x ∑ x Pr [ X = x ∣ B i ] = i = 1 ∑ n E [ X ∣ B i ] Pr ( B i )

全概率法则实际上就是 X = I ( A ) X = I(A) X = I ( A )

E [ E [ X ∣ Y ] ] = E [ X ] \mathbb{E}[\mathbb{E}[X \mid Y]] = \mathbb{E}[X]

E [ E [ X ∣ Y ]] = E [ X ]

证明

E [ E [ X ∣ Y ] ] = ∑ y E [ X ∣ Y = y ] Pr ( Y = y ) (定义) = E [ X ] (全期望法则) \begin{aligned}

\mathbb{E}[\mathbb{E}[X \mid Y]] &= \sum_y \mathbb{E}[X \mid Y = y]\Pr(Y = y) &\text{(定义)}\\

&= \mathbb{E}[X] &\text{(全期望法则)}\\

\end{aligned}

E [ E [ X ∣ Y ]] = y ∑ E [ X ∣ Y = y ] Pr ( Y = y ) = E [ X ] (定义) (全期望法则)

快排分析

1

2

3

4

5

6

7

QSort ( A ): an array A of n distinct entries if n > 1 choose a pivot x = A [ 1 ] partition A into L with all entries < x , and R with all entries > x QSort ( L ) and QSort ( R )

最差时间复杂度为 O ( n 2 ) O(n^2) O ( n 2 )

令 t ( n ) = E [ X n ] t(n) = \mathbb{E}[X_n] t ( n ) = E [ X n ] X n X_n X n QSort(A) 中的比较次数,其中 A 是有 n n n

记 B i B_i B i A[1] 是 A 中第 i i i

t ( n ) = E [ X n ] = ∑ i = 1 n E [ X n ∣ B i ] Pr ( B i ) = 1 n ∑ i = 1 n E [ n − 1 + X i − 1 + X n − i ] = n − 1 + 2 n ∑ i = 0 n − 1 t ( i ) \begin{aligned}

t(n) &= \mathbb{E}[X_n]\\

&= \sum_{i=1}^{n} \mathbb{E}[X_n \mid B_i] \Pr(B_i)\\

&= \dfrac{1}{n} \sum_{i=1}^{n} \mathbb{E}[n-1 + X_{i-1} + X_{n-i}]\\

&= n-1 + \dfrac{2}{n} \sum_{i=0}^{n-1}t(i)

\end{aligned}

t ( n ) = E [ X n ] = i = 1 ∑ n E [ X n ∣ B i ] Pr ( B i ) = n 1 i = 1 ∑ n E [ n − 1 + X i − 1 + X n − i ] = n − 1 + n 2 i = 0 ∑ n − 1 t ( i )

其中 t ( 0 ) = t ( 1 ) = 0 t(0) = t(1) = 0 t ( 0 ) = t ( 1 ) = 0

还可以使用期望的线性性质。

对于均匀随机输入,A 是一个 a 1 < ⋯ < a n a_1 < \dots < a_n a 1 < ⋯ < a n

令 X i j ∈ { 0 , 1 } X_{ij} \in \left\lbrace 0, 1 \right\rbrace X ij ∈ { 0 , 1 } a i , a j a_i, a_{j} a i , a j QSort(A) 中进行过比较。

注意到

任意对 a i , a j a_i, a_{j} a i , a j

从而有 X = ∑ i < j X i j X = \sum\limits_{i < j} X_{ij} X = i < j ∑ X ij

若 a i , a j a_i, a_{j} a i , a j i < k < j i < k < j i < k < j a k a_{k} a k a i , a j a_i, a_{j} a i , a j

从而有 E [ X i j ] = Pr [ a i , a j compared ] = Pr [ { a i , a j } ∣ { a i , … , a j } ] = 2 j − i + 1 \mathbb{E}[X_{ij}] = \Pr[a_i, a_j \text{ compared}] = \Pr[\left\lbrace a_i, a_{j} \right\rbrace \mid \left\lbrace a_i, \dots, a_{j} \right\rbrace] = \dfrac{2}{j-i+1} E [ X ij ] = Pr [ a i , a j compared ] = Pr [ { a i , a j } ∣ { a i , … , a j } ] = j − i + 1 2

于是

E [ X ] = ∑ i < j E [ X i j ] = ∑ i < j 2 j − i + 1 = ∑ i = 1 n ∑ k = 2 n − i + 1 2 k ⩽ 2 ∑ i = 1 n ∑ k = 1 n 1 k = 2 n H ( n ) = 2 n ln n + O ( n ) \begin{aligned}

\mathbb{E}[X] &= \sum_{i < j}\mathbb{E}[X_{ij}]\\

&= \sum_{i < j}\dfrac{2}{j-i+1}\\

&= \sum_{i=1}^{n}\sum_{k=2}^{n-i+1}\dfrac{2}{k}\\

&\le 2 \sum_{i=1}^{n} \sum_{k=1}^{n} \dfrac{1}{k}\\

&= 2 n H(n)\\

&= 2 n \ln n + O(n)

\end{aligned}

E [ X ] = i < j ∑ E [ X ij ] = i < j ∑ j − i + 1 2 = i = 1 ∑ n k = 2 ∑ n − i + 1 k 2 ⩽ 2 i = 1 ∑ n k = 1 ∑ n k 1 = 2 n H ( n ) = 2 n ln n + O ( n )

Random Family Tree (随机家谱树)

X 0 , X 1 , X 2 , … X_0, X_1, X_2, \dots X 0 , X 1 , X 2 , …

{ X 0 = 1 X n + 1 = ∑ j = 1 X n ξ j ( n ) \left\lbrace\begin{aligned}

X_0 &= 1\\

X_{n+1} &= \sum_{j=1}^{X_n}\xi_{j}^{(n)}

\end{aligned}\right.

⎩ ⎨ ⎧ X 0 X n + 1 = 1 = j = 1 ∑ X n ξ j ( n )

其中 ξ j ( n ) ∈ Z ⩾ 0 \xi_{j}^{(n)} \in \Z_{\ge 0} ξ j ( n ) ∈ Z ⩾ 0 μ = E [ ξ j ( n ) ] \mu = \mathbb{E}[\xi_{j}^{(n)}] μ = E [ ξ j ( n ) ]

X 0 = 1 X_0 = 1 X 0 = 1 E [ X 1 ] = E [ ξ 1 ( 0 ) ] = μ \mathbb{E}[X_1] = \mathbb{E}[\xi_1^{(0)}] = \mu E [ X 1 ] = E [ ξ 1 ( 0 ) ] = μ

对后面有

E [ X n ∣ X n − 1 = k ] = E [ ∑ j = 1 k ξ j ( n − 1 ) ∣ X n − 1 = k ] = k μ \begin{aligned}

\mathbb{E}[X_n \mid X_{n-1} = k] &= \mathbb{E}\left[\sum_{j=1}^{k}\xi_{j}^{(n-1)} \biggm| X_{n-1} = k\right]\\

&= k \mu

\end{aligned}

E [ X n ∣ X n − 1 = k ] = E [ j = 1 ∑ k ξ j ( n − 1 ) X n − 1 = k ] = k μ

于是 E [ X n ∣ X n − 1 ] = X n − 1 μ \mathbb{E}[X_n \mid X_{n-1}] = X_{n-1}\mu E [ X n ∣ X n − 1 ] = X n − 1 μ

从而

E [ X n ] = E [ E [ X n ∣ X n − 1 ] ] = E [ X n − 1 μ ] = μ E [ X n − 1 ] = μ n \begin{aligned}

\mathbb{E}[X_n] &= \mathbb{E}[\mathbb{E}[X_n \mid X_{n-1}]]\\

&= \mathbb{E}[X_{n-1}\mu]\\

&= \mu \mathbb{E}[X_{n-1}]\\

&= \mu^n

\end{aligned}

E [ X n ] = E [ E [ X n ∣ X n − 1 ]] = E [ X n − 1 μ ] = μ E [ X n − 1 ] = μ n

于是有

E [ ∑ n ⩾ 0 X n ] = ∑ n ⩾ 0 E [ X n ] = ∑ n ⩾ 0 μ n = { 1 1 − μ if 0 < μ < 1 ∞ if μ ⩾ 1 \begin{aligned}

\mathbb{E}\left[ \sum_{n\ge 0} X_n \right] &= \sum_{n\ge 0} \mathbb{E}[X_n]\\

&= \sum_{n\ge 0} \mu^n\\

&= \begin{cases}

\dfrac{1}{1-\mu} & \text{if } 0 < \mu < 1\\

\infty & \text{if } \mu \ge 1

\end{cases}

\end{aligned}

E [ n ⩾ 0 ∑ X n ] = n ⩾ 0 ∑ E [ X n ] = n ⩾ 0 ∑ μ n = ⎩ ⎨ ⎧ 1 − μ 1 ∞ if 0 < μ < 1 if μ ⩾ 1

琴生不等式(Jensen's Inequality)

对于一般的(非线性的)函数 f ( X ) f(X) f ( X ) X X X E [ f ( X ) ] = f ( E [ X ] ) \mathbb{E}[f(X)] = f(\mathbb{E}[X]) E [ f ( X )] = f ( E [ X ])

但若 f f f

琴生不等式(Jensen's Inequality)

若 f f f 凸 (convex)函数,等价于 f ( λ x + ( 1 − λ ) y ) ⩽ λ f ( x ) + ( 1 − λ ) f ( y ) f(\lambda x + (1 - \lambda)y) \le \lambda f(x) + (1-\lambda)f(y) f ( λ x + ( 1 − λ ) y ) ⩽ λ f ( x ) + ( 1 − λ ) f ( y ) X X X E [ f ( X ) ] ⩾ f ( E [ X ] ) \mathbb{E}[f(X)] \ge f(\mathbb{E}[X]) E [ f ( X )] ⩾ f ( E [ X ])

若 f f f 凹 (concave)函数,等价于 f ( λ x + ( 1 − λ ) y ) ⩾ λ f ( x ) + ( 1 − λ ) f ( y ) f(\lambda x + (1 - \lambda)y) \ge \lambda f(x) + (1-\lambda)f(y) f ( λ x + ( 1 − λ ) y ) ⩾ λ f ( x ) + ( 1 − λ ) f ( y ) X X X E [ f ( X ) ] ⩽ f ( E [ X ] ) \mathbb{E}[f(X)] \le f(\mathbb{E}[X]) E [ f ( X )] ⩽ f ( E [ X ])

期望的单调性(Monotonicity of Expectation)

对于随机变量 X , Y X, Y X , Y c ∈ R c \in \R c ∈ R

若 X ⩽ Y X \le Y X ⩽ Y E [ X ] ⩽ E [ Y ] \mathbb{E}[X] \le \mathbb{E}[Y] E [ X ] ⩽ E [ Y ]

若 X ⩽ c X \le c X ⩽ c X ⩾ c X \ge c X ⩾ c E [ X ] ⩽ E [ c ] \mathbb{E}[X] \le \mathbb{E}[c] E [ X ] ⩽ E [ c ] E [ X ] ⩾ E [ c ] \mathbb{E}[X] \ge \mathbb{E}[c] E [ X ] ⩾ E [ c ]

E [ ∣ X ∣ ] ⩾ ∣ E [ X ] ∣ ⩾ 0 \mathbb{E}[|X|] \ge | \mathbb{E}[X] | \ge 0 E [ ∣ X ∣ ] ⩾ ∣ E [ X ] ∣ ⩾ 0

第一点的证明

E [ X ] = ∑ x x Pr ( X = x ) = ∑ x x ∑ y Pr [ ( X , Y ) = ( x , y ) ] = ∑ x x ∑ y ⩾ x Pr [ ( X , Y ) = ( x , y ) ] = ∑ y ∑ x ⩽ y x Pr [ ( X , Y ) = ( x , y ) ] ⩽ ∑ y ∑ x ⩽ y y Pr [ ( X , Y ) = ( x , y ) ] ⩽ ∑ y y Pr ( Y = y ) = E [ Y ] \begin{aligned}

\mathbb{E}[X] & =\sum_{x} x \Pr(X=x)\\ &=\sum_{x} x \sum_{y} \Pr[(X, Y)=(x, y)] \\

& =\sum_{x} x \sum_{y \ge x} \Pr[(X, Y)=(x, y)]\\

&=\sum_{y} \sum_{x \le y} x \Pr[(X, Y)=(x, y)] \\

& \le \sum_{y} \sum_{x \le y} y \Pr[(X, Y)=(x, y)] \\

&\le \sum_{y} y\Pr(Y=y)\\

&=\mathbb{E}[Y]

\end{aligned}

E [ X ] = x ∑ x Pr ( X = x ) = x ∑ x y ∑ Pr [( X , Y ) = ( x , y )] = x ∑ x y ⩾ x ∑ Pr [( X , Y ) = ( x , y )] = y ∑ x ⩽ y ∑ x Pr [( X , Y ) = ( x , y )] ⩽ y ∑ x ⩽ y ∑ y Pr [( X , Y ) = ( x , y )] ⩽ y ∑ y Pr ( Y = y ) = E [ Y ]

平均法则(Average Principle)

Pr ( X ⩾ E [ X ] ) > 0 \Pr(X \ge \mathbb{E}[X]) > 0 Pr ( X ⩾ E [ X ]) > 0

若 Pr ( X < c ) = 1 \Pr(X < c) = 1 Pr ( X < c ) = 1 E [ X ] < c \mathbb{E}[X] < c E [ X ] < c

Pr ( X ⩽ E [ X ] ) > 0 \Pr(X \le \mathbb{E}[X]) > 0 Pr ( X ⩽ E [ X ]) > 0

若 Pr ( X > c ) = 1 \Pr(X > c) = 1 Pr ( X > c ) = 1 E [ X ] > c \mathbb{E}[X] > c E [ X ] > c

根据概率法 (probabilistic method):

∃ ω ∈ Ω s.t. X ( ω ) ⩾ E [ X ] \exists \omega \in \Omega\quad \text{s.t.}\quad X(\omega) \ge \mathbb{E}[X] ∃ ω ∈ Ω s.t. X ( ω ) ⩾ E [ X ] ∃ ω ∈ Ω s.t. X ( ω ) ⩽ E [ X ] \exists \omega \in \Omega\quad \text{s.t.}\quad X(\omega) \le \mathbb{E}[X] ∃ ω ∈ Ω s.t. X ( ω ) ⩽ E [ X ]

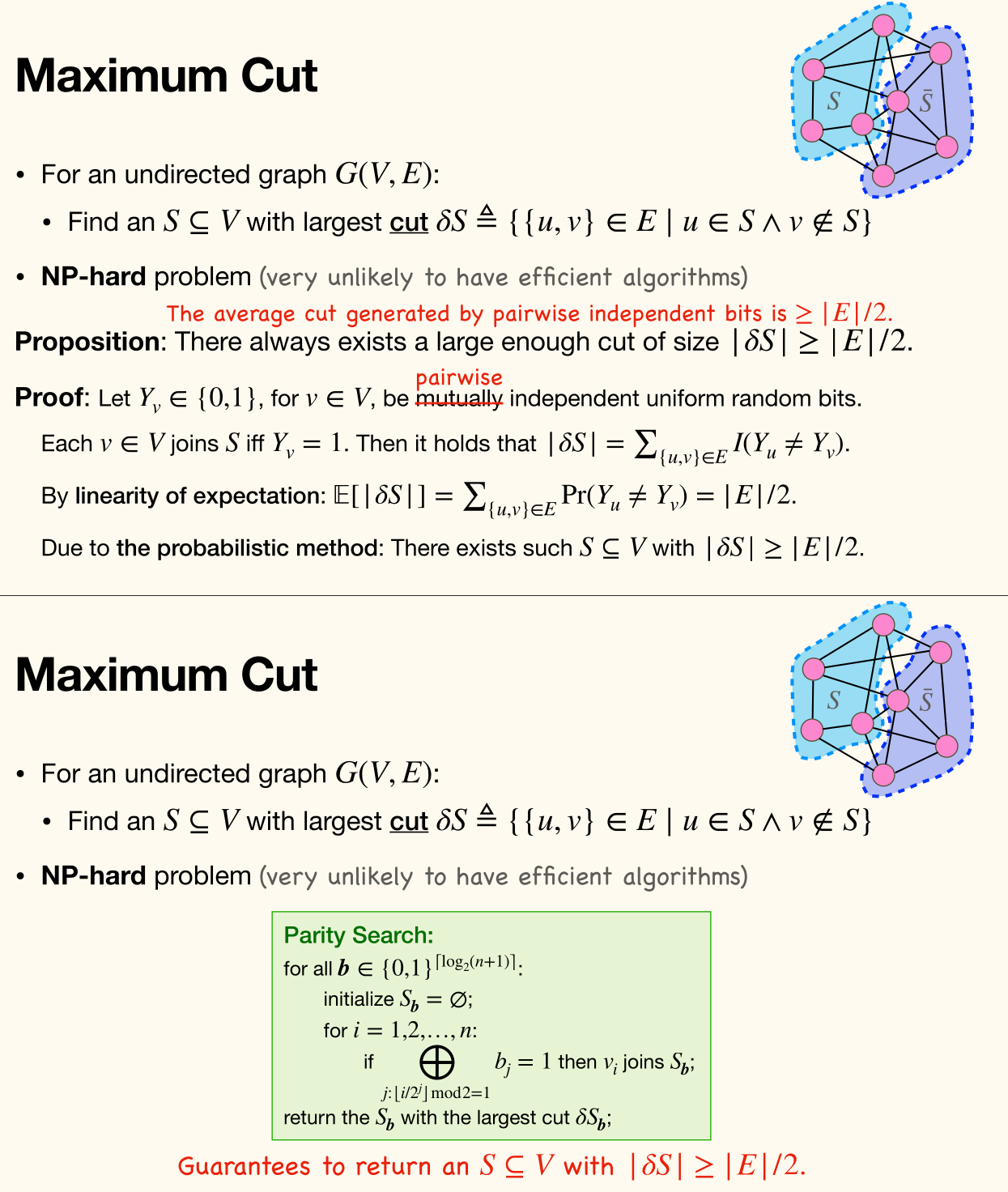

最大割(Maximum Cut)

对于一个无向图(undirected graph)G = ( V , E ) G = (V, E) G = ( V , E ) V = S ∪ S ˉ V = S \cup \bar{S} V = S ∪ S ˉ 割集 (cut set)δ S = { ( u , v ) ∈ E ∣ u ∈ S , v ∈ S ˉ } \delta S = \left\lbrace (u, v) \in E \mid u \in S, v \in \bar{S} \right\rbrace δ S = { ( u , v ) ∈ E ∣ u ∈ S , v ∈ S ˉ }

这是一个 NP 难问题。

始终存在一个割集,其大小至少为边集大小的一半,即

∣ δ S ∣ ⩾ 1 2 ∣ E ∣ |\delta S| \ge \dfrac{1}{2} |E|

∣ δ S ∣ ⩾ 2 1 ∣ E ∣

证明

令 Y v ∈ { 0 , 1 } Y_v \in \left\lbrace 0, 1 \right\rbrace Y v ∈ { 0 , 1 } v v v S S S

于是

∣ δ S ∣ = ∑ ( u , v ) ∈ E I ( Y u ≠ Y v ) |\delta S| = \sum_{(u, v) \in E} I(Y_u \ne Y_v)

∣ δ S ∣ = ( u , v ) ∈ E ∑ I ( Y u = Y v )

由期望的线性性质有

E [ ∣ δ S ∣ ] = ∑ ( u , v ) ∈ E E [ I ( Y u ≠ Y v ) ] = ∑ ( u , v ) ∈ E Pr ( Y u ≠ Y v ) = ∑ ( u , v ) ∈ E 1 2 = 1 2 ∣ E ∣ \begin{aligned}

\mathbb{E}[|\delta S|] &= \sum_{(u, v) \in E} \mathbb{E}[I(Y_u \ne Y_v)]\\

&= \sum_{(u, v) \in E} \Pr(Y_u \ne Y_v)\\

&= \sum_{(u, v) \in E} \dfrac{1}{2}\\

&= \dfrac{1}{2} |E|

\end{aligned}

E [ ∣ δ S ∣ ] = ( u , v ) ∈ E ∑ E [ I ( Y u = Y v )] = ( u , v ) ∈ E ∑ Pr ( Y u = Y v ) = ( u , v ) ∈ E ∑ 2 1 = 2 1 ∣ E ∣

概率法有,存在这样的 S ⊆ V S \subseteq V S ⊆ V ∣ δ S ∣ ⩾ 1 2 ∣ E ∣ |\delta S| \ge \dfrac{1}{2} |E| ∣ δ S ∣ ⩾ 2 1 ∣ E ∣